25. 02. 2009 Worker Node to Fileserver write throughput

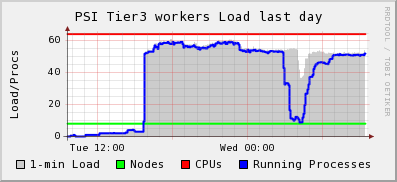

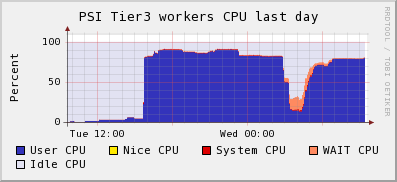

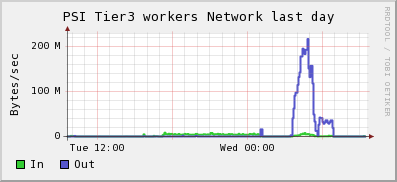

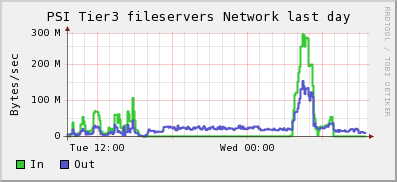

We currently have three Thumpers active in dCache. T. Punz had a number of jobs running which showed some I/O wait while writing in parallel to dCache. The maximum write throughput was around 300 MByte/s (≈ 2.5 Gbit/s), generated by ca. 25 gridftp connections (we do not know the number of streams). The SE showed a symmetric load of 30 MByte/s read/write from the worker nodes plus 20-30 MByte/s read from the UI (dcap, ca. 4 processes). Six worker nodes wrote at ca. 40 MByte/s (≈ 335 Mbit/s) each, and two at ca. 25 MByte/s. All showed some I/O wait and little CPU usage during the period of intensive writing. Based on the load graphs for the machines, it seems that each machine ran 6 to 8 of these jobs.

-- DerekFeichtinger - 25 Feb 2009
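The unit conversions and the aggregate rate quoted above can be checked with a short sketch. It assumes that "MByte" here means 2^20 bytes and "Mbit" means 10^6 bits, which is the combination that reproduces the quoted 335 Mbit/s and 2.5 Gbit/s; the entry itself does not state this. The function and variable names are illustrative only.

```python
# Sketch: reproduce the throughput figures from the log entry.
# Assumption (not stated in the entry): 1 MByte = 2**20 bytes, 1 Mbit = 10**6 bits.

BYTES_PER_MBYTE = 2**20
BITS_PER_MBIT = 10**6


def mbyte_s_to_mbit_s(mbyte_per_s: float) -> float:
    """Convert a rate in MByte/s to Mbit/s under the assumptions above."""
    return mbyte_per_s * BYTES_PER_MBYTE * 8 / BITS_PER_MBIT


# Per-node write rates reported in the entry: six nodes at ca. 40, two at ca. 25.
per_node_mbyte_s = [40] * 6 + [25] * 2
aggregate_mbyte_s = sum(per_node_mbyte_s)

per_node_mbit_s = mbyte_s_to_mbit_s(40)          # roughly 335 Mbit/s
peak_gbit_s = mbyte_s_to_mbit_s(300) / 1000      # roughly 2.5 Gbit/s

print(f"one node at 40 MByte/s: {per_node_mbit_s:.1f} Mbit/s")
print(f"peak of 300 MByte/s:    {peak_gbit_s:.2f} Gbit/s")
print(f"sum over 8 nodes:       {aggregate_mbyte_s} MByte/s")
```

The sum over the eight worker nodes comes out close to the observed maximum of about 300 MByte/s, so the per-node and aggregate figures are consistent with each other.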

* wn-cpu-day-2009020935.png:

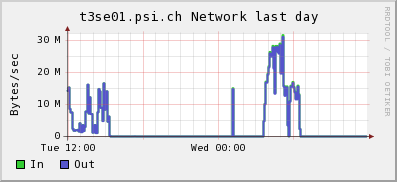

* wn_netw-day-2009020935.png:

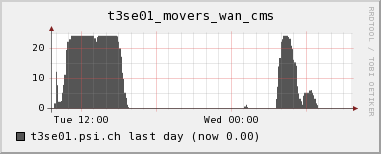

* fs-netw-day-2009020935.png:

* wan-movers-day-2009020935.png:

* se-netw.day-20090225.png:

13. 05. 2009 PhEDEx download throughput example

Error message statistics per site:

===================================

*** ERRORS from T1_DE_FZK_Buffer:***

1 copy did not complete or status unknown

*** ERRORS from T2_CH_CSCS:***

2 copy of [srm-URL] into [srm-URL] failed, status = SRM_FAILURE explanation=FAILED: at [date] state Failed : retrieval of "from" TURL failed with error java.lang.ArrayIndexOutOfBoundsException: 2

SITE STATISTICS:

==================

first entry: 2009-05-12 19:45:03 last entry: 2009-05-13 07:20:29

T1_DE_FZK_Buffer (OK: 105 Err: 1 Exp: 0 Canc: 0 Lost: 0) succ.: 99.1 % total: 133.8 GB ( 3.2 MB/s)

T2_CH_CSCS (OK: 830 Err: 2 Exp: 0 Canc: 0 Lost: 0) succ.: 99.8 % total: 1992.9 GB (47.8 MB/s)

TOTAL SUMMARY:

==================

first entry: 2009-05-12 19:45:03 last entry: 2009-05-13 07:20:29

total transferred: 1980.7 GB in 11.6 hours

avg. total rate: 48.6 MB/s = 388.9 Mb/s = 4101.3 GB/day
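The figures in the summary can be reproduced from the timestamps and totals in the output above. The sketch below is only a check of the arithmetic, not the code of the tool that produced the report. It assumes that the summary uses factors of 1024 between GB and MB, since that is what reproduces the printed 48.6 MB/s. The per-site totals add up to 2126.7 GB, which is about 1980.6 when expressed in units of 2^30 bytes, so the per-site lines may be using decimal units while the summary uses binary ones; this is an inference from the numbers and is not stated on the page.

```python
from datetime import datetime

# Sketch: re-derive the PhEDEx summary numbers from the values printed above.
# Assumption: the summary treats 1 GB as 1024 MB (binary units).

FMT = "%Y-%m-%d %H:%M:%S"
first = datetime.strptime("2009-05-12 19:45:03", FMT)
last = datetime.strptime("2009-05-13 07:20:29", FMT)

seconds = (last - first).total_seconds()
hours = seconds / 3600

total_gb = 1980.7                              # "total transferred" from the summary
rate_mb_s = total_gb * 1024 / seconds          # average rate in MB/s
rate_mbit_s = rate_mb_s * 8                    # same rate in Mb/s
gb_per_day = total_gb / seconds * 86400        # extrapolated to 24 hours


def success_percent(ok: int, err: int) -> float:
    """Share of successful transfers among finished ones, in percent."""
    return 100.0 * ok / (ok + err)


fzk_success = success_percent(105, 1)          # T1_DE_FZK_Buffer
cscs_success = success_percent(830, 2)         # T2_CH_CSCS

# Sum of the per-site totals, read as decimal GB and converted to 2**30-byte units.
site_sum_binary_gb = (133.8 + 1992.9) * 1e9 / 2**30

print(f"{hours:.1f} h, {rate_mb_s:.1f} MB/s, {rate_mbit_s:.1f} Mb/s, {gb_per_day:.1f} GB/day")
print(f"success: FZK {fzk_success:.1f} %, CSCS {cscs_success:.1f} %")
print(f"per-site sum in binary GB: {site_sum_binary_gb:.1f}")
```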

| Attachment | History | Size | Date | Who |
|---|---|---|---|---|
| fs-netw-day-2009020935.png | r1 | 17.5 K | 2009-02-25 - 10:46 | DerekFeichtinger |
| se-netw.day-20090225.png | r1 | 15.8 K | 2009-02-25 - 10:56 | DerekFeichtinger |
| wan-movers-day-2009020935.png | r1 | 10.6 K | 2009-02-25 - 10:46 | DerekFeichtinger |
| wn-cpu-day-2009020935.png | r1 | 13.8 K | 2009-02-25 - 10:45 | DerekFeichtinger |
| wn-load-day-2009020935.png | r1 | 16.6 K | 2009-02-25 - 10:45 | DerekFeichtinger |
| wn_netw-day-2009020935.png | r1 | 13.9 K | 2009-02-25 - 10:45 | DerekFeichtinger |


Topic revision: r2 - 2009-05-13 - DerekFeichtinger
