Service Card for Argus

Definition

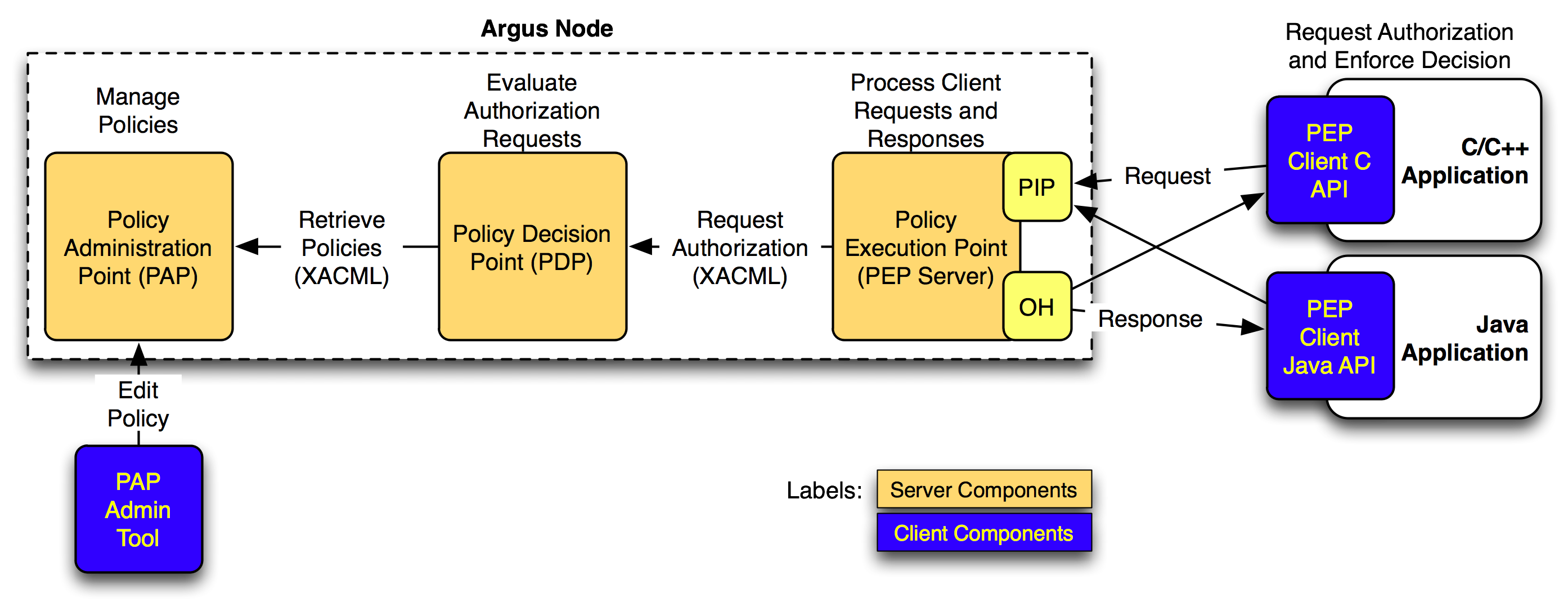

Argus is a system meant to render consistent authorization decisions for distributed services (e.g. compute elements, portals). To achieve this consistency, a number of points must be addressed. First, it must be possible to author and maintain consistent authorization policies. This is handled by the Policy Administration Point (PAP) component of the service. Second, authored policies must be evaluated in a consistent manner, a task performed by the Policy Decision Point (PDP). Finally, the data provided for evaluation against policies must be consistent (in form and definition), and this is done by the Policy Enforcement Point (PEP). The graphic above shows the interaction between the components of the service.

This service plays a key role when dealing with the gLExec binary running on all worker nodes (WNs). gLExec is a program that acts as a light-weight 'gatekeeper': it takes Grid credentials as input and takes the local site policy into account to authenticate and authorize the credentials. gLExec then switches to a new execution sandbox and executes the given command as the switched identity.

It is strongly recommended to check out the following slides: argus-policies-in-action.pdf: Argus policies in action (EGI TF 2011 presentation by Andrea Ceccanti)

Operations

When dealing with the service, it is important to make sure that the three services (argus-pap, argus-pdp and argus-pepd) are up and running. Additionally, after every policy modification the policies must be reloaded using the commands shown in this TWiki page. Policies must be modified exclusively on argus01; afterwards, either refresh the remote argus01_pap on argus02 by hand or wait 10 min for it to refresh automatically.

Client tools

Using the PAP administration console (pap-admin) it is possible to control the behaviour of a live Argus service as well as to tune the settings for the next time the service restarts. It is located in /usr/bin/pap-admin and can be run as root on each of the Argus servers, or from any UI by a user with one of the following certificates loaded:

    "/DC=com/DC=quovadisglobal/DC=grid/DC=switch/DC=hosts/C=CH/ST=Zuerich/L=Zuerich/O=ETH Zuerich/CN=argus01.lcg.cscs.ch" : ALL
    "/DC=com/DC=quovadisglobal/DC=grid/DC=switch/DC=hosts/C=CH/ST=Zuerich/L=Zuerich/O=ETH Zuerich/CN=argus02.lcg.cscs.ch" : ALL
    "/DC=com/DC=quovadisglobal/DC=grid/DC=switch/DC=users/C=CH/O=ETH Zuerich/CN=Miguel Angel Gila Arrondo" : ALL
    "/DC=com/DC=quovadisglobal/DC=grid/DC=switch/DC=users/C=CH/O=ETH Zuerich/CN=Pablo Fernandez" : ALL

This configuration is maintained in /etc/argus/pap/pap_authorization.ini; after any change, the PAP daemon must be restarted.
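Before restarting the PAP daemon it can be handy to confirm that a given certificate subject really is listed with ALL permissions. The following is a minimal sketch, assuming the entries in the file look exactly like the lines above (quoted DN, then ` : ALL`); `is_pap_admin` is a hypothetical helper name, not part of pap-admin.

```shell
#!/bin/sh
# Sketch: succeed if the given DN is granted ALL in a PAP authorization file.
# Assumption: entries are written as  "<DN>" : ALL  with exactly this spacing.
# is_pap_admin is a hypothetical helper, not an Argus tool.
is_pap_admin() (
    dn=$1
    file=${2:-/etc/argus/pap/pap_authorization.ini}
    # -F matches the DN literally (no regex), -q only sets the exit status.
    grep -F -q "\"$dn\" : ALL" "$file"
)
```

For example, `is_pap_admin "/DC=com/DC=quovadisglobal/DC=grid/DC=switch/DC=users/C=CH/O=ETH Zuerich/CN=Pablo Fernandez" && echo listed` would print `listed` on a server configured as above.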

Testing

- Testing the status of the services:

    $ grid-service status
    Running: grid-service status
    /etc/grid-security/gridmapdir mounted and readable
    /opt/edg/var/info mounted and readable
    PAP running!
    service: Argus PDP version 1.3.0
    start_time: 1305556860865
    number_of_processors: 6
    memory_usage: 137MB
    total_requests: 5
    total_completed_requests: 5
    total_request_errors: 0
    policy_load_instant: 1306752061121
    current_policy: root-default-85a15c9c-ef6f-40d9-bbbf-6932d64c3ef9
    current_policy_version: 1
    service: Argus PEP Server version 1.3.0
    start_time: 1305556222700
    number_of_processors: 6
    memory_usage: 160MB
    total_requests: 7
    total_completed_requests: 7
    total_request_errors: 0

- pepcli can be used to send any query to an Argus server. From a UI with the certificate loaded, run this to test glexec:

    $ export LD_PRELOAD=/opt/glite/lib64/libpep-c.so.1 && \
      /opt/glite/bin/pepcli --verbose --debug \
        --pepd https://argus02.lcg.cscs.ch:8154/authz \
        --resourceid "http://authz-interop.org/xacml/resource/resource-type/wn" \
        --actionid "http://glite.org/xacml/action/execute" \
        --capath /etc/grid-security/certificates/ \
        --cert $X509_USER_PROXY \
        --key $X509_USER_PROXY \
        --certchain $X509_USER_PROXY

- The same pepcli query, with a different resource id, tests CREAM-CE. From a UI with the certificate loaded, run:

    $ /opt/glite/bin/pepcli --verbose --debug \
        --pepd https://argus02.lcg.cscs.ch:8154/authz \
        --resourceid "http://lcg.cscs.ch/xacml/resource/resource-type/creamce" \
        --actionid "http://glite.org/xacml/action/execute" \
        --capath /etc/grid-security/certificates/ \
        --cert $X509_USER_PROXY \
        --key $X509_USER_PROXY \
        --certchain $X509_USER_PROXY

- glexec can be tested from a running WN. Assuming that our user certificate is loaded, valid and located in /tmp/test_proxy, we can run this:

    $ export GLEXEC_CLIENT_CERT=/tmp/test_proxy
    $ export GLEXEC_SOURCE_PROXY=/tmp/test_proxy
    $ export X509_USER_PROXY=/tmp/test_proxy
    $ /opt/glite/sbin/glexec /usr/bin/id
    uid=15176(dteam174) gid=2688(dteam)

- Listing the policies in all the paps, local and remote:

    $ pap-admin lp -all
    centralbanning (argus.cern.ch:8150):
    No policies has been found.
    default (local):
    resource "http://authz-interop.org/xacml/resource/resource-type/wn" {
        obligation "http://glite.org/xacml/obligation/local-environment-map" {
        }
        action "http://glite.org/xacml/action/execute" {
            rule permit { vo="dteam" }
            rule permit { pfqan="/atlas/Role=pilot" }
            rule permit { pfqan="/ops/Role=pilot" }
        }
    }

- Listing the paps installed:

    $ pap-admin lpaps
    alias = centralbanning (remote, enabled, private, https://argus.cern.ch:8150/pap/services/)
    alias = default (local, enabled, public)

- After changing policies, we need to reload them and clear the cache. That can be done with this command:

$ grid-service reload
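After a reload, the status output shown at the top of this section can also be checked by a script rather than by eye. The sketch below assumes that `grid-service status` prints one `key: value` pair per line and the service banners exactly as listed above; `count_request_errors` and `has_all_services` are hypothetical helper names.

```shell
#!/bin/sh
# Sketch: inspect "grid-service status" output read from stdin.
# Assumption: one "key: value" pair per line, as in the listing above.

# Print the sum of all total_request_errors counters (PDP + PEP).
count_request_errors() {
    awk '$1 == "total_request_errors:" { sum += $2 } END { print sum + 0 }'
}

# Succeed only if PAP, PDP and PEP Server all appear in the output.
has_all_services() {
    awk '
        /^PAP running!/              { pap = 1 }
        /^service: Argus PDP/        { pdp = 1 }
        /^service: Argus PEP Server/ { pep = 1 }
        END { exit !(pap && pdp && pep) }
    '
}
```

A possible use is `grid-service status | has_all_services || echo "a service is missing"`, followed by `grid-service status | count_request_errors` to see whether any request errors have accumulated.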

Failover check

Just make sure that both machines, argus01 and argus02, are reachable from all the WNs.

Checking logs

All the Argus service logs are under /var/log/argus and are the following:

    $ find /var/log/argus/ -type f
    /var/log/argus/pdp/access.log
    /var/log/argus/pdp/audit.log
    /var/log/argus/pdp/process.log
    /var/log/argus/pepd/access.log
    /var/log/argus/pepd/audit.log
    /var/log/argus/pepd/process.log
    /var/log/argus/pap/pap-standalone.log
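A quick way to see which of these logs deserves a closer look is to count error lines per file. This is only a sketch: it assumes that error entries contain the literal string `ERROR` (which depends on the logging configuration), and `count_log_errors` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: print "<count> <file>" for every *.log file under the Argus log dir.
# Assumption: error entries contain the literal level string "ERROR".
count_log_errors() (
    logdir=${1:-/var/log/argus}
    find "$logdir" -type f -name '*.log' | sort | while IFS= read -r f; do
        # grep -c prints the number of matching lines (0 when there are none).
        printf '%s %s\n' "$(grep -c 'ERROR' "$f")" "$f"
    done
)
```

Running `count_log_errors` without arguments scans /var/log/argus; a different directory can be passed as the first argument.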

Set up

Although the configuration of this service is generated by YAIM, it's worth noting that the config files are located in /etc/argus and are the following:

    $ find /etc/argus/ -type f ! -name '*yaim*' ! -name '*rpmnew*'
    /etc/argus/pdp/logging.xml
    /etc/argus/pdp/pdp.ini
    /etc/argus/pepd/logging.xml
    /etc/argus/pepd/pepd.ini
    /etc/argus/pap/pap-admin.properties
    /etc/argus/pap/pap_configuration.ini
    /etc/argus/pap/attribute-mappings.ini
    /etc/argus/pap/pap_authorization.ini
    /etc/argus/pap/logging/client/logback.xml
    /etc/argus/pap/logging/standalone/logback.xml

It is interesting to note as well that the logging level is set in the XML files.

Dependencies (other services, mount points, ...)

Like most other grid services, it depends on the following mounts in order to maintain consistency between CREAM, LRMS and this service:

    nfs:/shared/gridmapdir /etc/grid-security/gridmapdir nfs bg,rw,proto=tcp,rsize=32768,wsize=32768,soft,intr,nfsvers=3 0 0
    nfs:/shared/vo_tags /opt/edg/var/info nfs bg,rw,proto=tcp,rsize=32768,wsize=32768,soft,intr,nfsvers=3 0 0
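The two mount points can be verified with a few lines of shell, similar in spirit to the "mounted and readable" lines printed by `grid-service status`. The sketch below reads a mounts table in /proc/mounts format (mount point in the second field); `is_mounted` and `check_argus_mounts` are hypothetical helper names.

```shell
#!/bin/sh
# Sketch: check that the NFS dependencies of Argus are mounted and readable.

# is_mounted DIR [MOUNTS_FILE]: succeed if DIR appears as a mount point
# (second field) in MOUNTS_FILE, which defaults to /proc/mounts.
is_mounted() (
    dir=$1
    mounts=${2:-/proc/mounts}
    awk -v d="$dir" '$2 == d { found = 1 } END { exit !found }' "$mounts"
)

# check_argus_mounts [MOUNTS_FILE]: report on both directories, fail if any is bad.
check_argus_mounts() (
    rc=0
    for d in /etc/grid-security/gridmapdir /opt/edg/var/info; do
        if is_mounted "$d" "${1:-/proc/mounts}" && [ -r "$d" ]; then
            echo "$d mounted and readable"
        else
            echo "$d NOT mounted or not readable" >&2
            rc=1
        fi
    done
    exit "$rc"
)
```

Because /etc/fstab also keeps the mount point in its second field, `is_mounted /opt/edg/var/info /etc/fstab` can be used to confirm that the entry is configured even when it is not currently mounted.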

Redundancy notes

The Argus service is configured in a semi-redundant way, with argus01 acting as the main authorization service and argus02 as backup or secondary. It works this way:

- Worker nodes query argus01 and, if no answer is received, they then query argus02.

- The PAP policies in both Argus servers are similar and they are applied in this order:

  - centralbanning is a remote policy maintained by CERN and refreshed every 10 min. It is active in both servers.

  - default is the default local policy maintained by us. argus01 has a complete default policy, while the one in argus02 is empty.

  - argus01_pap is a remote policy that argus02 imports from argus01. It gets refreshed every 10 min.
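The query order described above (argus01 first, argus02 only if there is no answer) can be illustrated with a small helper. This is a sketch of the ordering logic only, not the mechanism the worker nodes actually use; `first_responding` and the probe functions are hypothetical names.

```shell
#!/bin/sh
# Sketch: try hosts in order and print the first one that answers.
# first_responding PROBE HOST...
#   PROBE is any command or function that takes a host name and succeeds
#   when that host answers (hypothetical; supply your own check).
first_responding() (
    probe=$1
    shift
    for host in "$@"; do
        if "$probe" "$host" >/dev/null 2>&1; then
            echo "$host"
            exit 0
        fi
    done
    # No host answered.
    exit 1
)
```

A probe could, for instance, wrap the pepcli query from the Testing section with `--pepd` pointing at the given host, so that `first_responding my_probe argus01.lcg.cscs.ch argus02.lcg.cscs.ch` reports which server would currently take the request.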

Installation

In the CERN TWiki

- Let's make sure that the EGI trust anchors, fetch-crl and the following packages are installed in the system:

    $ yum install ca-policy-egi-core fetch-crl yum-priorities yum-protectbase --enablerepo=cscs,epel
    $ service fetch-crl-cron start
    $ chkconfig fetch-crl-cron on

- [only EMI EA] Now let's make sure that the emi-release package, with all the info regarding the repositories, is also installed:

    $ yum install emi-release --enablerepo=cscs

- [only UMD] Let's make sure that the correct repos are installed:

    $ ls /etc/yum.repos.d/UMD* /etc/yum.repos.d/epel.repo
    /etc/yum.repos.d/UMD-1-base.repo
    /etc/yum.repos.d/UMD-1-updates.repo
    /etc/yum.repos.d/epel.repo

- The emi-argus package must be installed:

  - If we are installing it from scratch, we must run:

        $ yum install emi-argus

  - If we are installing on a machine that already has the old RC4 packages, this must be executed instead:

        $ yum makecache
        $ yum update emi-argus

- At this point the Argus PAP, PDP and PEP servers are installed in the system. Now they must be configured using YAIM:

$ /opt/glite/yaim/bin/yaim -c -s /opt/cscs/siteinfo/site-info.def -n ARGUS_server

In the CERN TWiki we can find the YAIM configuration variables.


- Since we modify the file /etc/argus/pap/pap_authorization.ini to add the subjects of our certificates as administrators, we now need to run cfengine:

    $ cfagent -q
    $ grid-service restart
    $ grid-service reload

Policies setup

- [argus01 + argus02] First of all, the centralbanning policy must be imported in both servers, argus01 and argus02. These banning policies are maintained by OSCT / EGI CSIRT from a CERN server.

    # pap-admin add-pap centralbanning argus.cern.ch "/DC=ch/DC=cern/OU=computers/CN=argus.cern.ch"
    # pap-admin enable-pap centralbanning
    # pap-admin set-paps-order centralbanning default
    # pap-admin refresh-cache centralbanning

- [argus01] Then we need to populate the default policy only in argus01. This is done by importing the generated policies file into the policies repository:

  - Download the following script and generate the list of policies:

        $ wget https://wiki.chipp.ch/twiki/pub/LCGTier2/ServiceArgus/from-groupmap-to-policy.sh -O /root/from-groupmap-to-policy.sh
        $ chmod +x /root/from-groupmap-to-policy.sh
        $ /root/from-groupmap-to-policy.sh >> /root/localpolicy_fromgroupmap-to-policy

  - Add the generated policies to the PAP:

        $ pap-admin apf /root/localpolicy_fromgroupmap-to-policy
        $ pap-admin lp

- [argus02] And now all we have to do is to import the policies set up in argus01 to argus02. From argus02 we need to do this:

    $ pap-admin add-pap argus01_pap argus01.lcg.cscs.ch "/DC=com/DC=quovadisglobal/DC=grid/DC=switch/DC=hosts/C=CH/ST=Zuerich/L=Zuerich/O=ETH Zuerich/CN=argus01.lcg.cscs.ch"
    $ pap-admin enable-pap argus01_pap
    $ pap-admin set-paps-order centralbanning default argus01_pap
    $ pap-admin refresh-cache argus01_pap
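To give an idea of what a policies file for the default PAP on argus01 looks like, here is a minimal sketch that writes one for a list of VOs, using the same resource, obligation and action identifiers that appear in the `pap-admin lp` output in the Testing section. It is not the attached from-groupmap-to-policy.sh script; `make_wn_policy` is a hypothetical helper, and permitting whole VOs with `vo="..."` rules is just one of the rule forms shown on this page.

```shell
#!/bin/sh
# Sketch: print a WN execute policy that permits each VO given as argument.
# make_wn_policy is a hypothetical helper, NOT from-groupmap-to-policy.sh.
make_wn_policy() (
    cat <<'EOF'
resource "http://authz-interop.org/xacml/resource/resource-type/wn" {
    obligation "http://glite.org/xacml/obligation/local-environment-map" {
    }
    action "http://glite.org/xacml/action/execute" {
EOF
    # One permit rule per VO name.
    for vo in "$@"; do
        printf '        rule permit { vo="%s" }\n' "$vo"
    done
    printf '    }\n}\n'
)
```

The output can be redirected to a file and loaded with `pap-admin apf <file>` as in the step above; it is worth reviewing the file by eye before importing it.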

Installing GLExec in the Worker Nodes

As part of the authorization service, all the worker nodes on which pilot jobs run must be configured to use GLEXEC_wn. This is done by YAIM once the right variables in site-info.def have been set.

- First, let's make sure that the proper repository is installed. At the time of writing these notes there is no EMI-specific release of glexec, so we install GLEXEC_wn from the gLite repository (configured via cfengine).

$ yum install glite-GLEXEC_wn --enablerepo=epel --enablerepo=glite-GLEXEC_wn --enablerepo=dag --enablerepo=glite-GLEXEC_wn_updates

- Now we have to run the YAIM configuration of the WN with -n glite-GLEXEC_wn at the end:

    $ /opt/glite/yaim/bin/yaim -c -s /opt/cscs/siteinfo/site-info.def -n WN -n TORQUE_client -n glite-GLEXEC_wn

- Now, run cfagent:

$ cfagent -q

- Finally, restart the pbs_mom service:

$ service pbs_mom restart

Copy your personal proxy from the UI to the WN and change the ownership of the proxy to any pilot account, then log into that account and run:

    $ export GLEXEC_CLIENT_CERT=/tmp/test_proxy; export GLEXEC_SOURCE_PROXY=/tmp/test_proxy; export X509_USER_PROXY=/tmp/test_proxy; /opt/glite/sbin/glexec /usr/bin/id
    uid=15176(dteam174) gid=2688(dteam)
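The manual check above can be wrapped in a small function that refuses to run when the proxy file is missing, which avoids a confusing glexec error. This is a sketch; `glexec_test` and the `GLEXEC_BIN` override are hypothetical names introduced only for illustration.

```shell
#!/bin/sh
# Sketch: run the glexec identity test with the three proxy variables set.
# glexec_test [PROXY]   (PROXY defaults to /tmp/test_proxy)
# GLEXEC_BIN is a hypothetical override for the glexec path, useful for dry runs.
glexec_test() (
    proxy=${1:-/tmp/test_proxy}
    if [ ! -r "$proxy" ]; then
        echo "proxy $proxy is missing or not readable" >&2
        exit 1
    fi
    export GLEXEC_CLIENT_CERT="$proxy"
    export GLEXEC_SOURCE_PROXY="$proxy"
    export X509_USER_PROXY="$proxy"
    # On success glexec prints the id of the mapped pool account.
    "${GLEXEC_BIN:-/opt/glite/sbin/glexec}" /usr/bin/id
)
```

The function runs in a subshell, so the exported variables do not leak into the calling session.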

Upgrade

- Shut down the service on ONE Argus server (preferably argus02).

- Run a package upgrade.

- Run YAIM

- Run cfengine

- Now the service should be up and running as usual.
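The upgrade steps can be rehearsed as a dry run before touching a server. The sketch below only prints the commands unless `DRY_RUN=0` is set. Several things here are assumptions rather than documented procedure: that the `grid-service` wrapper accepts `stop`, and that the package upgrade is `yum update emi-argus` (the command used in the Installation section for the RC4 upgrade). The YAIM and cfagent invocations are the ones from the Installation section; `run` and `upgrade_argus` are hypothetical helper names.

```shell
#!/bin/sh
# Sketch: rehearse the Argus upgrade sequence; commands run only if DRY_RUN=0.

# run CMD...: echo the command, execute it only when DRY_RUN=0.
run() {
    echo "+ $*"
    [ "${DRY_RUN:-1}" = 0 ] || return 0
    "$@"
}

# Assumption: "grid-service stop" exists; only status/restart/reload
# are shown elsewhere on this page.
upgrade_argus() {
    run grid-service stop &&
    run yum update emi-argus &&
    run /opt/glite/yaim/bin/yaim -c -s /opt/cscs/siteinfo/site-info.def -n ARGUS_server &&
    run cfagent -q &&
    run grid-service status
}
```

Calling `upgrade_argus` prints the five steps in order; `DRY_RUN=0 upgrade_argus` would execute them and stop at the first failure.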

Monitoring

Instructions about monitoring the service

Nagios

Ganglia

Self Sanity / revival?

Other?

Manuals

- pap-admin cli manual

- GLExec official TWiki: https://www.nikhef.nl/pub/projects/grid/gridwiki/index.php/GLExec

- ARGUS official TWiki: https://twiki.cern.ch/twiki/bin/view/EGEE/AuthorizationFramework

Issues

Information about issues found with this service, and how to deal with them.

Issue1

If you get the following message when running a job (glite-ce-job-status -L2):

    Cannot move ISB (retry_copy ${globus_transfer_cmd} gsiftp://ppcream01.lcg.cscs.ch/var/local_cream_sandbox/dteam/_DC_com_DC_quovadisglobal_DC_grid_DC_switch_DC_users_C_CH_O_ETH_Zuerich_CN_Miguel_Angel_Gila_Arrondo_dteam_Role_NULL_Capability_NULL_dteam010/44/CREAM443806849/ISB/ui64.lcg.cscs.ch-1109131020156690000_remote_command.sh file:///tmpdir_pbs/59.pplrms02.lcg.cscs.ch/CREAM443806849/ui64.lcg.cscs.ch-1109131020156690000_remote_command.sh): error: globus_ftp_client: the server responded with an error
    530 530-Login incorrect. : globus_gss_assist: Error invoking callout
    530-globus_callout_module: The callout returned an error
    530-an unknown error occurred
    530 End.]

Please make sure that the TIME AND DATE of all machines involved in the transfer (CREAM, WN, LRMS and, above all, ARGUS) are set correctly and that the ntpd service is set to start automatically. If the system time is incorrect, fetch-crl cannot work properly and, consequently, all gridftp transfers will fail.
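A simple way to spot this problem is to compare the clock of each machine against a reference. The sketch below only does the comparison; how the reference timestamp is obtained (for instance over ssh from another host) and the 60-second default threshold are assumptions, and `clock_skew_ok` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: succeed if two epoch timestamps differ by at most MAX seconds.
# clock_skew_ok LOCAL_EPOCH REF_EPOCH [MAX_SECONDS]   (MAX defaults to 60)
clock_skew_ok() (
    local_ts=$1
    ref_ts=$2
    max=${3:-60}
    diff=$((local_ts - ref_ts))
    # Take the absolute value of the difference.
    if [ "$diff" -lt 0 ]; then
        diff=$((-diff))
    fi
    [ "$diff" -le "$max" ]
)
```

For example, `clock_skew_ok "$(date +%s)" "$(ssh argus01 date +%s)" || echo "clock drift detected"` compares the local clock with argus01, assuming ssh access to that host is available.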

Issue2

- from-groupmap-to-policy.sh: Script to create policies from groupmapdir

- argus-policies-in-action.pdf: Argus policies in action (EGI TF 2011 presentation by Andrea Ceccanti)

| ServiceCardForm | |
|---|---|
| Service name | Argus |
| Machines this service is installed in | argus[01,02] |
| Is Grid service | Yes |
| Depends on the following services | nfs |

| Attachment | History | Size | Date | Who | Comment |
|---|---|---|---|---|---|
| argus-policies-in-action.pdf | r1 | 1312.1 K | 2011-09-22 - 12:02 | MiguelGila | Argus policies in action (EGI TF 2011 presentation by Andrea Ceccanti) |
| from-groupmap-to-policy.sh | r1 | 0.9 K | 2011-08-31 - 07:11 | MiguelGila | Script to create policies from groupmapdir |
| glite-ARGUS_components-v2.png | r1 | 323.1 K | 2011-05-05 - 09:18 | MiguelGila | glite/EMI ARGUS server schema |


Topic revision: r23 - 2012-02-01 - MiguelGila
