CMS Site Log for PHOENIX Cluster

10. 2. 2007 Cleaning of data sets from SE

I cleaned several data sets from our DPM, since no space was left. (One shared pool from LHCb, which we had opened to all, had never been used; this seems to be a DPM problem.) A number of production jobs had failed in writing to our storage, but the problem was only detected when the production team ran the merge jobs. The files exist in the DPM nameserver, but there are no SFNs connected to them. They can be detected easily, since they show up as files with a length of 0 bytes in the output of commands like edg-gridftp-ls --verbose or rfdir.
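The check for 0-byte entries can be sketched as a small filter over captured listing output. This is only an illustration: it assumes the listing is laid out like `ls -l` (whitespace-separated fields, size in the fifth column, name last), which should be verified against the real output of the command used. The function name and the sample lines are invented.

```python
def zero_length_entries(listing_text, size_column=4):
    """Return the names of entries whose size field is 0.

    Assumes an `ls -l`-style listing: whitespace-separated fields, the
    size in column `size_column` (0-based) and the name as the last field.
    """
    names = []
    for line in listing_text.splitlines():
        fields = line.split()
        # Skip blank lines and headers that lack a numeric size field.
        if len(fields) <= size_column or not fields[size_column].isdigit():
            continue
        if int(fields[size_column]) == 0:
            names.append(fields[-1])
    return names


# Invented sample listing, for illustration only.
sample = """\
-rw-rw-r--   1 cmsprd   cms   1048576 Feb 09 14:02 merged_0001.root
-rw-rw-r--   1 cmsprd   cms         0 Feb 09 14:05 unmerged_0002.root
-rw-rw-r--   1 cmsprd   cms         0 Feb 09 14:07 unmerged_0003.root
"""
print(zero_length_entries(sample))
```

If the size sits in a different column in the actual output, only the `size_column` argument needs to change.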

Gave this feedback to Alexander Flossdorf from DESY.

12. 2. 2007 Test of PhEDEx 2_5_0_1

Tested the Dev instance of the new PhEDEx distribution. Subscribed to the /phedex/monarctest_CERN/1 data set. The files were routed from PIC, and the FTS at FZK seemed to work fine.

After half of the files had completed, there was again the typical error for many transfers.

Failed Transfer failed. ERROR the server sent an error response: 425 425 Can't open data connection. timed out() failed.

The PhEDEx monitoring page showed that T1_PIC transfers to a number of other sites were also problematic, but I found a different error for every other site.

Since we are currently busy getting the new dCache SE up and our old DPM SE has not much space available, I just started the PhEDEx Prod instance, but did not subscribe to new data right now.


20. 2. 2007 started Cycle-1 / Week-2 exercise

Following the plan laid out in Daniele Bonacorsi's pages.

21./22. 2. 2007 myproxy renewal problem / no source files from IN2P3

Due to a myproxy renewal problem on our side, the transfers of last night failed. There had been multiple attempts to transfer from FZK. All the following routings picked IN2P3 as a source, even though the requested files had already been erased there (so there was an inconsistency between TMDB and what the center had on disk). The problem was corrected the following day. I cancelled all subscriptions in the Dev instance and subscribed to a new set of monarc test files in the Prod instance. The data sets were:

- /phedex_prod/monarctest_CERN/6

- /phedex_prod/monarctest_CERN/7

- /phedex_prod/monarctest_CERN/8

In the evening transfers from FZK began to kick in with reasonable efficiency:

23. 2. 2007 Good transfers from FZK

Finally very good throughput from FZK (considering that it still only goes to a single file server on our site!). The three test sets were completed around noon, so ~1.5 TB in 2 days with some routing problems.
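As a rough cross-check of what this volume means as a sustained rate, the average can be computed directly. This is a back-of-the-envelope sketch, assuming decimal units (1 TB = 10^12 bytes, 1 MB = 10^6 bytes) and the full 48 hours, including the time lost to routing problems; the function name is invented.

```python
def average_rate_mb_per_s(volume_tb, duration_days):
    """Average transfer rate in MB/s for a volume in TB moved over a number of days."""
    bytes_total = volume_tb * 1e12          # decimal terabytes to bytes
    seconds = duration_days * 24 * 3600     # days to seconds
    return bytes_total / seconds / 1e6      # bytes/s to MB/s


print(f"{average_rate_mb_per_s(1.5, 2):.1f} MB/s")  # prints "8.7 MB/s"
```

The rate during periods when transfers were actually running was necessarily higher than this average.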

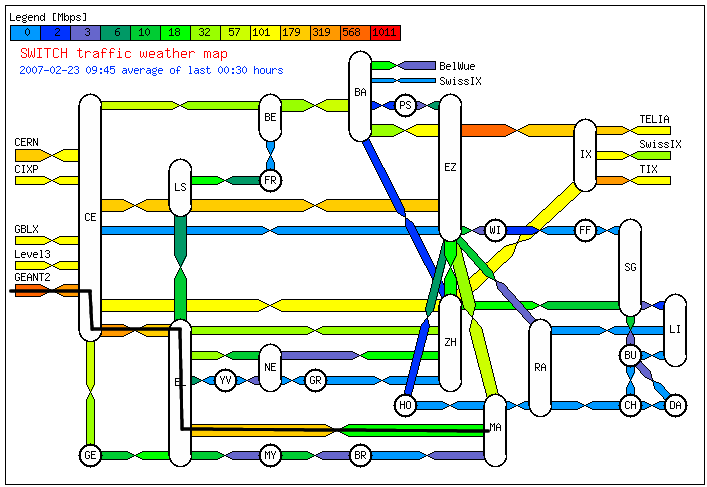

Based on the SWITCH traffic weather map

traceroute to f01-110-106-e.gridka.de (192.108.45.151), 30 hops max, 38 byte packets
 1  148.187.33.2 (148.187.33.2)  0.877 ms  0.438 ms  0.732 ms
 2  148.187.32.4 (148.187.32.4)  0.728 ms  0.480 ms  0.770 ms
 3  swima2.cscs.ch (148.187.20.2)  0.444 ms  0.697 ms  0.957 ms
 4  swiEL2-10GE-1-4.switch.ch (130.59.37.77)  3.938 ms  3.420 ms  3.728 ms
 5  swiCE3-10GE-1-3.switch.ch (130.59.37.65)  4.675 ms  4.520 ms  4.723 ms
 6  swiCE2-10GE-1-4.switch.ch (130.59.36.209)  4.111 ms  4.759 ms  4.478 ms
 7  switch.rt1.gen.ch.geant2.net (62.40.124.21)  4.525 ms  4.710 ms  4.371 ms
 8  so-7-2-0.rt1.fra.de.geant2.net (62.40.112.22)  12.588 ms  12.851 ms  13.050 ms
 9  dfn-gw.rt1.fra.de.geant2.net (62.40.124.34)  12.592 ms  19.913 ms  12.552 ms
10  xr-fzk1-te2-3.x-win.dfn.de (188.1.145.50)  15.632 ms  15.776 ms  15.342 ms
11  kr-fzk.x-win.dfn.de (188.1.38.222)  16.896 ms  16.719 ms  15.432 ms
12  * * *
13  f01-110-106-e.gridka.de (192.108.45.151)  17.772 ms  19.327 ms  20.521 ms

27. 2. 2007 Test with FZK MONARC sample

Did a further test with FZK using a MONARC sample that had been prepared there: /phedex_monarctest/FZK-DISK1/MonarcTest_FZK-DISK1.

Transfers started with high throughput, ranging from 20 to 40 MB/s, but towards the end of the data set the famous "425 425 Can't open data connection" error repeatedly caused failures for one particular file. The error was not connected to the file itself, since other sites had the same problem with other files. Based on a message from Doris Ressmann from FZK, these seem to be timeout issues due to overload of their dCache gridftp doors.


Topic revision: r12 - 2007-03-14 - DerekFeichtinger
